Most people who build machine learning models are not in the training data.

The dataset is collected from somewhere else - scraped from the internet, labeled by contractors, gathered from sensors. The person building the model is a step removed from the data. They work with it, shape it, evaluate it, but they are not in it.

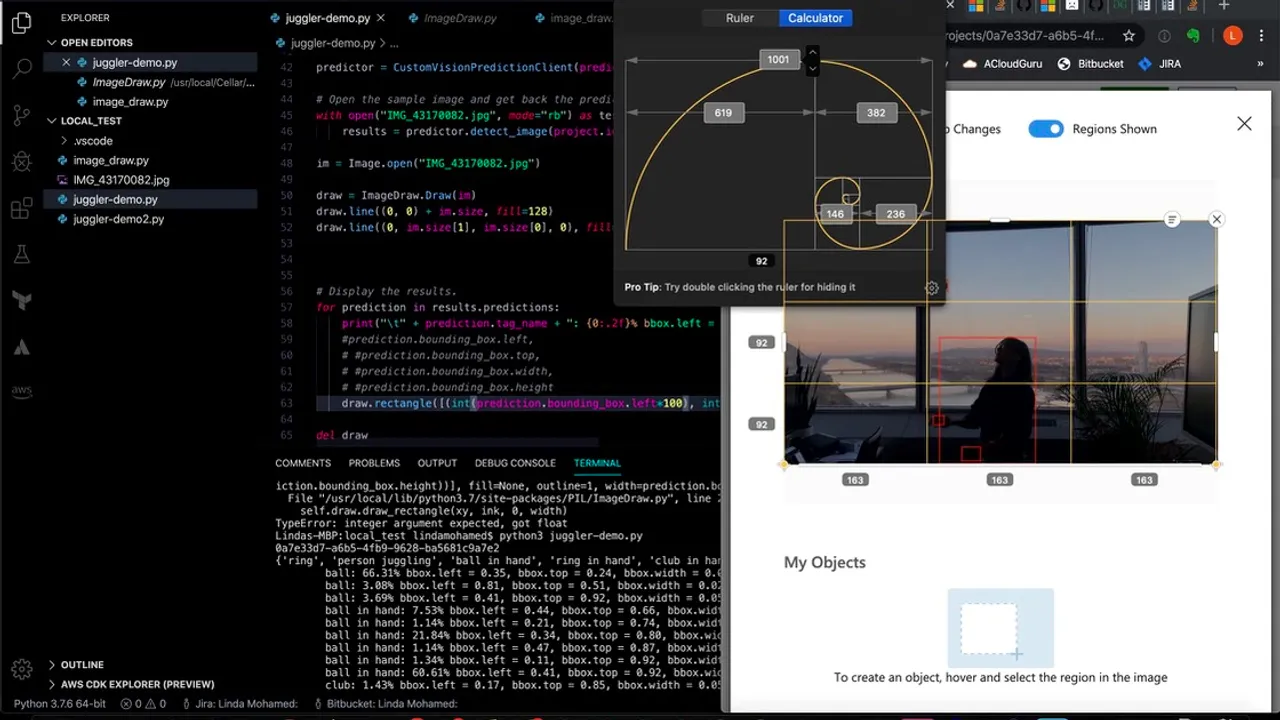

When I built OTTO, I was the dataset.

Every frame the model trained on was a frame of me juggling. Every labeled bounding box was drawn around my body, my arms, my throws. The model learned what a juggling throw looks like by learning what my juggling throw looks like. This created something interesting and something to be careful about.

What the model learned about me specifically

A computer vision model trained on one person’s throwing technique will learn that person’s technique.

My throws have a specific arc. My hands move in a way that reflects my particular body mechanics and the props I use. The model learned these things. It became, over many training iterations, quite good at detecting the specific kind of throw I make in the conditions I labeled.

This is useful for a system designed to track one specific performer. It is a problem for a system meant to generalize.

The failure mode appeared when I showed the model throws from other jugglers. The predictions degraded - not completely, but noticeably. The model had learned “juggling” with my body’s definition of the word. Other jugglers threw differently enough that the model was less confident.

The personalization-generalization tradeoff

Every model trained on specific data faces this tradeoff.

| Juggling | Machine Learning |
|---|---|
| Trained on my specific throwing arc | Model trained on one writing style |
| Accurate on my throws, uncertain on others' | Good at one person's patterns, worse on others' |
| Adding other jugglers' footage improves generalization | Adding diverse training examples reduces overfitting |
| Enough data to be useful for one performer | Enough data for the intended deployment context |

The question is whether the intended use case is fine with that specificity or requires broader generalization. For OTTO, the question was: is this for tracking one performer, or for general juggling detection? The answer changes everything about how the training data needs to be structured.
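One way to make the "one performer or general detection" question measurable is to split the evaluation data by person rather than by frame. The sketch below is a minimal Python illustration, not OTTO's actual pipeline. The frame dictionaries and the `performer` key are hypothetical.

Each performer is held out in turn, so the model is only scored on a body and technique it never saw in training. A random frame-level split works differently: it scatters near-identical adjacent frames of the same person across train and test, which can make a single-performer model look like it generalizes. scikit-learn's `LeaveOneGroupOut` implements the same idea for array inputs.

```python
from collections import defaultdict


def leave_one_performer_out(frames):
    """Yield (held_out_performer, train, test) splits.

    `frames` is a list of dicts with a hypothetical "performer" key.
    Every frame of the held-out performer goes to the test set, so the
    model is scored only on a person it never trained on.
    """
    by_performer = defaultdict(list)
    for frame in frames:
        by_performer[frame["performer"]].append(frame)
    for held_out in sorted(by_performer):
        test = by_performer[held_out]
        # All frames from every other performer form the training set.
        train = [f for p, fs in by_performer.items() if p != held_out for f in fs]
        yield held_out, train, test


# Toy example: two frames of one performer, one frame of another.
frames = [
    {"performer": "me", "id": 1},
    {"performer": "me", "id": 2},
    {"performer": "juggler_b", "id": 3},
]
for held_out, train, test in leave_one_performer_out(frames):
    print(held_out, len(train), len(test))
# juggler_b 2 1
# me 1 2
```

If the footage contains only one performer, the only split has an empty training set. That is itself the answer: the data can support tracking one person, not general detection.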

What it is like to label your own body

There is something specific about annotating frames of yourself that is worth knowing before you try it: you start noticing things about your own technique that you had not seen before.

When you draw a bounding box around your own throw a thousand times, you notice that your right arm is lower than your left at release. You notice that your throws cluster to the right when you are tired. You notice your hand position changes between a standard throw and one you make when you are about to lose the pattern.

The labeling process was also, unexpectedly, a practice session. Looking at yourself from the outside - frame by frame, isolated from the flow of the juggling - is a different kind of feedback than the usual throw-catch-throw. It is the kind of attention that is hard to sustain during performance but that reveals things that performance cannot.

The model knows your patterns before you do

By the end of the labeling and training process, the model had a very accurate map of my throwing patterns. It could predict, with high confidence, where the ball would appear in the next frame.

This raises a question worth sitting with: if a model trained on your behavior can predict your next move with high accuracy, what does that tell you about how predictable your patterns are? And when predictability is a limitation - when you need to be less legible to the system, or more varied in your approach - what does changing your training data actually require?

You have to throw differently. The model learns from what you do.

The bias I accidentally built

There was a more uncomfortable finding that took longer to surface.

The model worked reliably on one specific type of person: a short woman juggling the way I juggle. Not just “juggling” - my technique, my body proportions, my arc height relative to my frame. The moment I tested it on anyone else, the confidence dropped. On tall men, on men in general, the model essentially failed. It did not just perform worse. It did not recognize what it was seeing as juggling at all.

It even had trouble with me when I looked different. My hair changes constantly. When the visual signature shifted enough - different length, different color, different style - the model sometimes failed to recognize that the person in the frame was juggling. It had learned a composite: my technique plus something about my appearance, collapsed together into a single learned representation.
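The informal test of running the model on different people and watching where it fails can be made repeatable. The idea is to report detection recall separately for each group instead of as one averaged number. This is a minimal sketch under stated assumptions:

- There is one labeled throw per frame.
- Boxes are `(x1, y1, x2, y2)`.
- A detection counts as a match when intersection over union (IoU) reaches 0.5.
- The sample dictionaries, with `group`, `truth`, and `pred` keys, are hypothetical.

The boxes in the usage lines are toy values, not measurements from OTTO.

```python
from collections import defaultdict


def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def recall_by_group(samples, threshold=0.5):
    """Fraction of labeled throws detected, broken out per group.

    Each sample is a hypothetical dict with "group", "truth" (the labeled
    box) and "pred" (the model's box, or None if it detected nothing).
    """
    hits, totals = defaultdict(int), defaultdict(int)
    for s in samples:
        totals[s["group"]] += 1
        if s["pred"] is not None and iou(s["truth"], s["pred"]) >= threshold:
            hits[s["group"]] += 1
    return {g: hits[g] / totals[g] for g in totals}


# Toy boxes only: one group is detected well, the other is missed entirely.
samples = [
    {"group": "me", "truth": (0, 0, 10, 10), "pred": (1, 1, 10, 10)},
    {"group": "me", "truth": (0, 0, 10, 10), "pred": (0, 0, 10, 10)},
    {"group": "others", "truth": (0, 0, 10, 10), "pred": None},
    {"group": "others", "truth": (0, 0, 10, 10), "pred": (8, 8, 18, 18)},
]
print(recall_by_group(samples))  # {'me': 1.0, 'others': 0.0}
```

A single overall recall of 0.5 would average the two groups together and hide the gap. The per-group breakdown is what exposes it.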

The usual bias story runs in the other direction. Most large training datasets contain more data from men than from women - particularly in professional and technical domains. The result is systems that work well on men and fail on women. I accidentally built the exact inverse: a model that worked on one short woman and discriminated against everyone else, including every man who tried it.

The architecture of the failure was identical in both cases. The data reflected the collector. The model learned the collector’s world. The people outside that world were invisible to it.

AI reflects the data we have. Most of the time, the data we have reflects who collected it. The bias is not in the algorithm. It is upstream.
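Since the problem sits upstream in the data, a first check is simply to count who is in it. The sketch below is a hypothetical illustration, not OTTO's code. It does two things:

- It reports each group's share of the labeled frames.
- It computes inverse-frequency sample weights, one common way to stop a majority group from dominating the training loss.

The weight formula, total / (number of groups × group count), matches the "balanced" heuristic documented for scikit-learn's `class_weight`. The group labels and the 80/20 split are made up for the example.

```python
from collections import Counter


def composition(labels):
    """Share of the dataset contributed by each group."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {g: n / total for g, n in counts.items()}


def inverse_frequency_weights(labels):
    """Per-sample weights so each group contributes equally in total.

    weight = total / (num_groups * count_of_that_group)
    """
    counts = Counter(labels)
    total, k = len(labels), len(counts)
    return [total / (k * counts[g]) for g in labels]


# Made-up labels: 8 frames of one performer, 2 of everyone else.
labels = ["me"] * 8 + ["other"] * 2
print(composition(labels))      # {'me': 0.8, 'other': 0.2}
weights = inverse_frequency_weights(labels)
print(weights[0], weights[-1])  # 0.625 2.5
```

Reweighting only rescales examples that already exist. A group with no footage at all still contributes nothing, which is why adding other jugglers' footage is the more direct fix.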

The reason I find this useful to talk about is not the failure itself but the method that revealed it. I did not run an audit. I did not read a paper. I built a thing, tested it on different people, and watched it fail in a specific and informative way. The failure taught me something about bias in AI systems that no amount of reading had made concrete for me before.

This is how I learn about architecture and technology: by building something real, putting it in front of conditions it was not designed for, and observing what breaks and how. The implication is in the failure pattern. The lesson is in the specificity of what did not work.

I trained a model that could not see tall men. I now understand dataset balance in a way that is not abstract.
