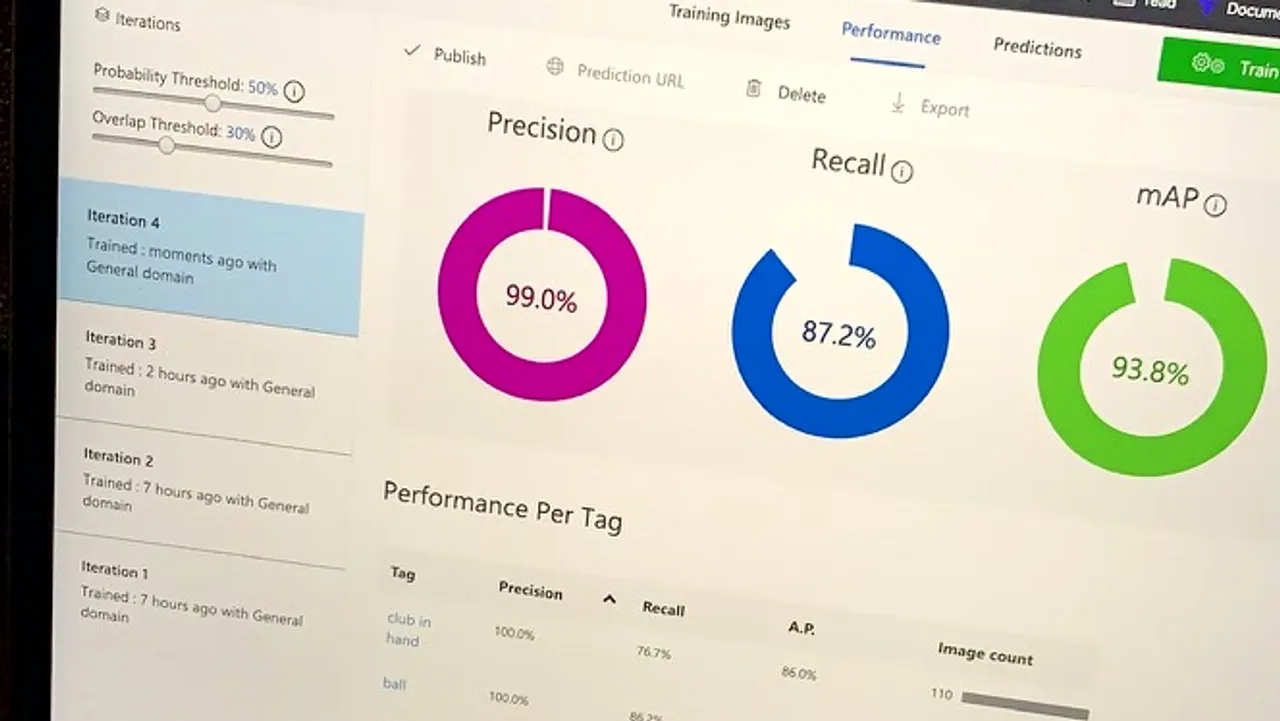

The number on the screen is 99 percent.

It took weeks to reach it - weeks of labeling frames, retraining the model, evaluating on held-out data, finding the categories where precision was low, labeling more examples in those categories, retraining again. The loop ran until the number stopped improving meaningfully and stabilized at 99.

This is what a training result looks like: a number on a screen that summarizes weeks of work and means exactly one thing, precisely defined, and does not mean several other things that are easy to assume it means.

What precision means

Precision answers one question: of all the times the model said it found the target, what fraction was it right?

A model with 99 percent precision is almost never wrong when it reports a detection. When it draws a bounding box and labels it “juggling ball,” then on footage like the evaluation set, about 99 of every 100 such boxes really do contain a juggling ball.

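The other metric that matters is recall: of all the juggling balls that were actually in the frames, what fraction did the model find? Precision says nothing about the balls the model missed, so a model can reach 99 percent precision while still missing many of them. For a given model, the two metrics are in tension: raising the confidence threshold for reporting a detection typically raises precision and lowers recall, and lowering it does the reverse.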
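As a minimal sketch of the two definitions, the Python below computes precision and recall at a given confidence threshold. The function name `precision_recall`, the `(score, is_correct)` representation, and the six detections are all illustrative assumptions, not data from the project described here.

```python
from typing import List, Tuple


def precision_recall(
    detections: List[Tuple[float, bool]],
    num_actual: int,
    threshold: float,
) -> Tuple[float, float]:
    """Return (precision, recall) for detections scoring at or above threshold.

    detections: hypothetical (confidence score, was the detection correct) pairs.
    num_actual: how many real targets were present in the frames.
    """
    # Keep only the detections the model would report at this threshold.
    kept = [is_correct for score, is_correct in detections if score >= threshold]
    true_positives = sum(kept)
    reported = len(kept)
    # Precision is undefined with zero reported detections; this sketch returns 0.0.
    precision = true_positives / reported if reported else 0.0
    recall = true_positives / num_actual if num_actual else 0.0
    return precision, recall


# Made-up example: six detections, five real juggling balls in the frames.
detections = [
    (0.95, True),
    (0.90, True),
    (0.80, True),
    (0.70, False),
    (0.60, True),
    (0.40, False),
]
num_actual = 5

loose = precision_recall(detections, num_actual, threshold=0.5)
strict = precision_recall(detections, num_actual, threshold=0.75)
print("threshold 0.50:", loose)
print("threshold 0.75:", strict)
```

In this toy data, the stricter threshold drops the one wrong detection it had been reporting, so precision rises, but it also drops a correct one, so recall falls.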

Why 99 percent feels like a finish line

There is a specific experience of seeing a metric reach a number that sounds like completion. 99 percent sounds like it is practically 100 percent, which sounds like done.

99 percent is a real number. It is earned. It also reflects the training distribution more than it reflects the world.

It is not done. It is a milestone in a loop.

The model was trained on specific data, in specific conditions, at a specific point in time. It performs at 99 percent precision on the evaluation set, which was sampled from the same distribution as the training data. The same model, deployed on footage from a different venue with different lighting, or on a juggler with different technique, or on props of a different color, will perform at a different precision.

The gap between precision on the evaluation set and precision in deployment is what deployment teaches.

The number as a starting point

99 percent was not the end of the project. It was the point at which the model was good enough to deploy into a test environment and see what happened when it encountered real-world conditions it had not trained on.

The failures in that phase were instructive in a way the training metrics could not be. The frames where the model reported a detection and was wrong told me specifically what it had not seen enough of: particular angles, particular distances, particular lighting conditions. Each failure was a training example I had not yet added.
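One way to turn those failures into a labeling plan is to tally the false detections by the condition they occurred in. The sketch below assumes each reviewed detection was logged as a dictionary with hypothetical keys (`predicted`, `actual`, `lighting`, `distance`). The records are invented for illustration and are not the project's logs.

```python
from collections import Counter
from typing import Dict, List


def false_positive_counts(records: List[Dict], field: str) -> Counter:
    """Count false positives grouped by one condition field.

    A false positive here is a record where the model reported the target
    (predicted is True) but a human reviewer found none (actual is False).
    """
    return Counter(
        record[field]
        for record in records
        if record["predicted"] and not record["actual"]
    )


# Invented review log: one entry per detection checked after deployment.
records = [
    {"predicted": True, "actual": True, "lighting": "even", "distance": "near"},
    {"predicted": True, "actual": False, "lighting": "backlit", "distance": "far"},
    {"predicted": True, "actual": False, "lighting": "backlit", "distance": "near"},
    {"predicted": True, "actual": False, "lighting": "dim", "distance": "far"},
    {"predicted": True, "actual": True, "lighting": "backlit", "distance": "near"},
]

by_lighting = false_positive_counts(records, "lighting")
by_distance = false_positive_counts(records, "distance")

# The most common failure condition is a candidate for the next labeling batch.
print(by_lighting.most_common())
print(by_distance.most_common())
```

Raw counts only point at where to look. A condition can top the list simply because it appears most often in the footage, so it helps to compare each count against how many detections were reviewed under that condition.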

The loop continued: deploy, observe failure cases, label them, retrain, redeploy. This is the MLOps loop in practice. Not as a theoretical framework but as the actual daily work of keeping a model performing in the world it is deployed in rather than the world it was trained on.

Where the work actually happens

This kind of model training does not require a lab or a server room. It runs on a laptop, in an ordinary working environment, alongside everything else.

Building a custom AI model is no longer specialized work that requires a research team. The tools are accessible. The difficulty is not in accessing them. It is in building the dataset, running the loop enough times, and understanding what the metrics actually mean.

Read next: Being the Training Data - what it means to label frames of yourself.