The question that started the project was simple: can a machine learn to recognize a juggling throw?

Not in a laboratory setup with controlled lighting and a fixed camera. In real conditions - different backgrounds, different lighting, different throws. The kind of variation that makes object detection interesting and hard.

The tool was Azure Custom Vision. The dataset was me - throwing, catching, juggling in different spaces. The labeled training data was built frame by frame: here is the ball, here is the arc, here is what a throw looks like at the moment of release.

What Custom Vision required

Training a Custom Vision model is a loop - the same loop as juggling practice, applied to machine learning.

You start with a few labeled examples and train. The model makes predictions. You review what it got right and what it missed. You add more examples for the conditions where it is failing - different angles, different lighting, edge cases the model has not seen. You retrain. You evaluate again.

The model improves not because it is getting smarter in any general sense but because the training data is covering more of the space of real-world variation. Each round of labeling and retraining is a correction cycle - the same feedback loop as adjusting your throw when the ball keeps going too far left.
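The "where is it failing" step can be sketched in a few lines of Python. This is an illustration, not the workflow Custom Vision prescribes: it assumes each reviewed frame was tagged by hand with a condition label and a flag for whether the model found the ball. The condition names, the sample data, and the `rank_conditions_by_miss_rate` helper are all hypothetical.

```python
from collections import defaultdict


def rank_conditions_by_miss_rate(reviews):
    """Group manual review results by condition and rank by miss rate.

    reviews: iterable of (condition, detected) pairs.
    Returns (condition, miss_rate, frame_count) tuples, worst first.
    """
    totals = defaultdict(int)
    misses = defaultdict(int)
    for condition, detected in reviews:
        totals[condition] += 1
        if not detected:
            misses[condition] += 1
    ranked = [(c, misses[c] / totals[c], totals[c]) for c in totals]
    # Highest miss rate first: these conditions need more labeled examples.
    ranked.sort(key=lambda row: row[1], reverse=True)
    return ranked


# Hypothetical review results, invented for illustration only.
reviews = [
    ("indoor_bright", True), ("indoor_bright", True),
    ("indoor_bright", True), ("indoor_bright", False),
    ("backlit", False), ("backlit", False), ("backlit", True),
    ("side_angle", True), ("side_angle", False),
]

for condition, miss_rate, count in rank_conditions_by_miss_rate(reviews):
    print(f"{condition}: {miss_rate:.0%} missed over {count} frames")
```

Whatever lands at the top of that list is the next thing to film and label before retraining.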

Testing in the field

The real test is always the one outside the training environment.

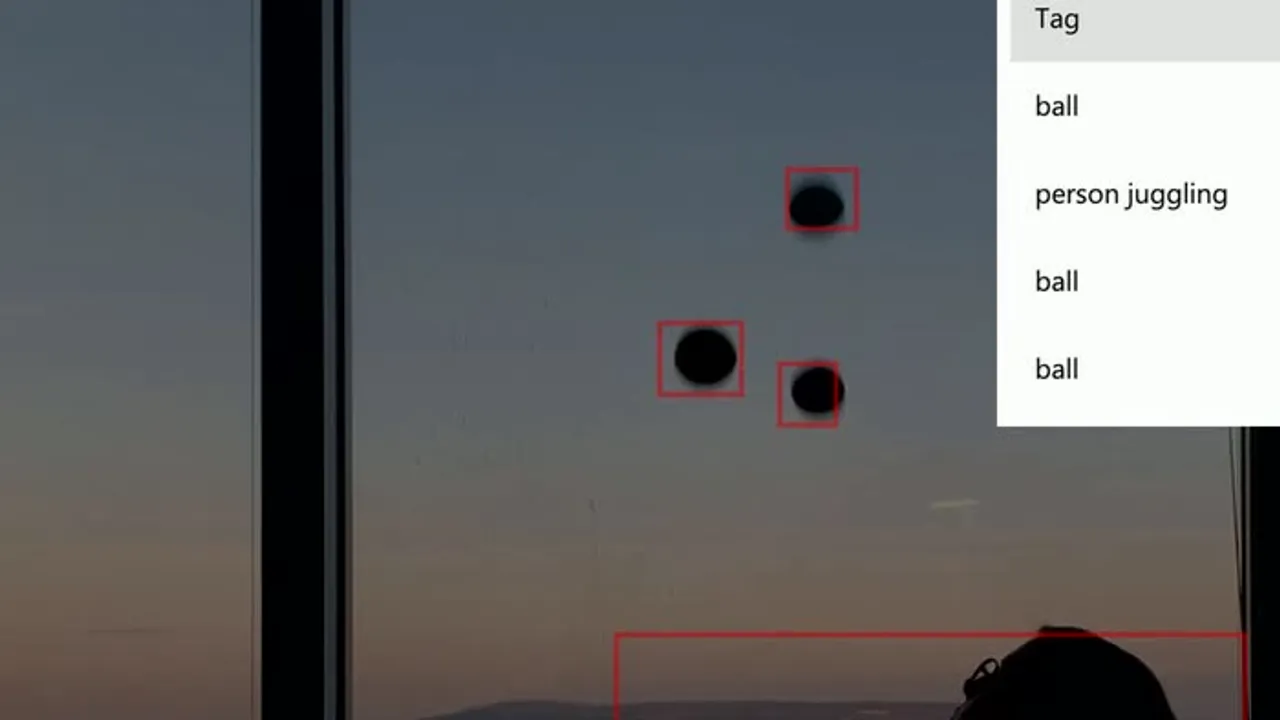

The Vienna test was the first time the model ran on video I had not used in training - in a space it had not seen, under lighting it had not been trained on. The bounding boxes drawn over the frame are the model’s output in real time: here is where it thinks the juggler’s body is, here is where it thinks the ball is.

The model worked. Not perfectly - edge cases and fast motion still produced misses. But the precision was high enough to be useful, and more importantly, to be improvable. The error cases were informative: specific angles, specific distances, specific lighting conditions where the model needed more training examples.
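Deciding whether a box counts as a hit or a miss needs a rule. A common one is intersection over union (IoU): compare the predicted box with a hand-labeled box and call it a hit if they overlap enough. The sketch below assumes boxes are given as `(left, top, width, height)`. The `is_hit` helper and the 0.5 cutoff are assumptions for illustration, not values taken from this project.

```python
def iou(box_a, box_b):
    """Intersection over union for two (left, top, width, height) boxes."""
    ax1, ay1, aw, ah = box_a
    bx1, by1, bw, bh = box_b
    ax2, ay2 = ax1 + aw, ay1 + ah
    bx2, by2 = bx1 + bw, by1 + bh
    # Overlap along each axis; zero when the boxes do not touch.
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    intersection = inter_w * inter_h
    union = aw * ah + bw * bh - intersection
    return intersection / union if union > 0 else 0.0


def is_hit(predicted, labeled, min_iou=0.5):
    """Hypothetical rule: a prediction is a hit if IoU meets the cutoff."""
    return iou(predicted, labeled) >= min_iou


# Invented boxes: a labeled ball and a prediction shifted slightly off it.
labeled_ball = (0.40, 0.20, 0.10, 0.10)
predicted_ball = (0.41, 0.21, 0.10, 0.10)
print(is_hit(predicted_ball, labeled_ball))
```

A small ball in fast motion is unforgiving here: a shift of a fraction of the box width is enough to push the overlap below the cutoff, which is one reason fast throws show up as misses.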

What the 99 percent means and does not mean

High precision means that when the model says it found something, it is usually right. It does not mean the model finds everything. A model can have 99 percent precision and still miss many throws - it is just being conservative, only flagging what it is very confident about.

This tradeoff between precision and recall is one of the first things anyone building a detection system has to understand. The right balance depends entirely on what you are building it for. For juggling detection in a performance context, you probably want high recall - find every throw. For a system that triggers an alarm, you want high precision - do not fire unless very confident.
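The tradeoff is easier to see with numbers. Precision is the share of flagged detections that are correct; recall is the share of real throws that get flagged. The sketch below sweeps a confidence threshold over a list of detections. The detections, the count of ten real throws, and the `precision_recall` helper are invented for illustration and are not results from this project.

```python
def precision_recall(detections, total_true, threshold):
    """Compute precision and recall at a given confidence threshold.

    detections: list of (confidence, is_correct) pairs, one per proposed box.
    total_true: number of real throws present in the footage.
    """
    kept = [correct for confidence, correct in detections if confidence >= threshold]
    true_positives = sum(kept)
    # Precision is undefined when nothing is flagged; reported as 0.0 here.
    precision = true_positives / len(kept) if kept else 0.0
    recall = true_positives / total_true if total_true else 0.0
    return precision, recall


# Invented detections: (model confidence, whether the box was a real throw).
detections = [
    (0.99, True), (0.97, True), (0.95, True), (0.90, True), (0.80, True),
    (0.70, False), (0.60, True), (0.50, True), (0.40, False), (0.30, True),
]
total_true_throws = 10  # assumed ground truth for this toy example

for threshold in (0.9, 0.5, 0.25):
    precision, recall = precision_recall(detections, total_true_throws, threshold)
    print(f"threshold {threshold}: precision {precision:.0%}, recall {recall:.0%}")
```

With this toy data, a 0.9 threshold flags only four throws, all of them correct, and misses the other six. Lowering the threshold to 0.25 finds eight of the ten but lets two false detections through.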

The feedback loop never ends

The model reached 99 percent precision, and that was the milestone for this phase of the project. But the loop does not stop there.

Every new environment, every new performer, every new kind of prop the model encounters is new training data. A model trained entirely on one juggler’s throws in one kind of space will degrade when deployed in a fundamentally different context. The loop continues: deploy, monitor, identify failure cases, retrain.

The cascade pattern applies to MLOps just as it applies to juggling practice. You do not reach a level and then stop. You reach a level and that level becomes the floor you build from.

Read next: Being the Training Data - what it means when the developer and the dataset are the same person.